Quality/GMPs

More News

Abzena’s Dr. Jeffrey C. Mocny and Cellares’ Anna McMahon discuss how biopharmaceutical risk-based standards accelerate innovation and speed-to-market by leveraging data for better patient outcomes and safety.

The approval of zenocutuzumab-zbco for NRG1 fusion-positive cholangiocarcinoma expands precision oncology options for patients with rare molecularly defined cancers.

FDA’s extended review of a subcutaneous formulation of lecanemab highlights ongoing regulatory evaluation of alternative anti-amyloid delivery approaches for early Alzheimer’s disease.

Data from a Phase III study show statistically significant improvements in proptosis and diplopia, along with favorable tolerability, which support regulatory advancement of elegrobart, a subcutaneous IGF-1R–targeting therapy for chronic autoimmune disease.

MRM Health’s MH002 gains FDA fast track, advancing microbiome-based therapy targeting immune modulation in ulcerative colitis patients.

BeOne Medicines’ tislelizumab and zanidatamab show improved survival in HER2+ GEA, highlighting combination immunotherapy advances in gastroesophageal cancer.

Henlius and Organon have secured approval in Europe for their pertuzumab (POHERDY) biosimilar, expanding biosimilar access for HER2-positive breast cancer treatment across oncology settings.

FDA has granted priority review to Johnson & Johnson's nipocalimab for warm autoimmune hemolytic anemia, a rare disorder with no approved US therapies.

CHMP has supported intrathecal onasemnogene abeparvovec, Novartis' gene therapy for 5q SMA in patients aged 2 years and older in the EU.

ADC cleaning validation requires risk-based strategies to manage degradation and ensure safe limits for highly potent, dual-modality therapeutics, says Paul Lopolito, STERIS’ director of Technical Services, at INTERPHEX 2026.

Savara’s inhaled GM-CSF therapy for autoimmune PAP faces delayed review, potentially postponing access to a treatment targeting impaired lung function.

FDA approval of a denosumab (Prolia) biosimilar and dual filing acceptances by FDA and EMA for an omalizumab (Xolair) biosimilar candidate from Teva signal increasing competition in the biosimilar markets as well as expanded access for allergy and immunology patients.

The approval introduces a one-time gene therapy for LAD-I that restores immune function and addresses the underlying cause of a life-threatening pediatric disease.

FDA’s approval of a high-dose nusinersen from Biogen improves SMA treatment durability and supports evolving therapy sequencing strategies in a competitive neuromuscular market.

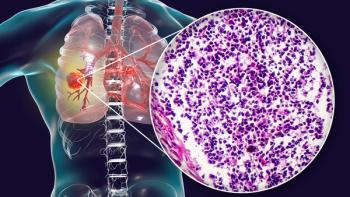

GSK’s B7-H3-targeted ADC has shown durable responses in SCLC, supporting regulatory momentum and advancing targeted approaches for high-unmet-need lung cancers.

Data from a Phase III trial showed durable survival benefit with PD-L1 blockade plus chemotherapy, reinforcing the use of immunotherapy in curative-intent GI cancer settings.

Regulatory acceptance of tildrakizumab's sBLA signals a potential expansion of IL-23 inhibition into joint disease, an area in which treatment gaps persist for a substantial share of psoriatic disease patients.

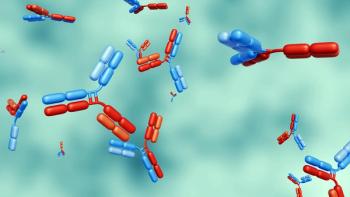

The companies’ new immunology-driven mAb, which targets an immune-evasion pathway in myeloproliferative neoplasms, is advancing toward first-in-human trials.

Data from the MajesTEC-9 Phase III trial show that teclistamab as monotherapy improves survival, indicating a shift in multiple myeloma treatment.

Phase III trial results show that trastuzumab deruxtecan reduced the risk of invasive recurrence or death by 53% in patients with residual HER2-positive disease after neoadjuvant therapy.

China’s approval of Sciwind’s ecnoglutide for weight management, alongside the company’s Pfizer commercialization deal, intensifies competition in the global GLP-1 market.

Johnson & Johnson reports encouraging Phase 1b data for its bispecific antibody pasritamig in combination with docetaxel in metastatic castration-resistant prostate cancer, showing strong PSA responses and manageable safety.

Siegfried Schmitt, PhD, vice president, Technical at Parexel, explains why it is necessary to search beyond the term “data integrity” to stay abreast of developments in this field.

FDA’s priority review designation of Regeneron Pharmaceuticals’ garetosmab underscores Activin A inhibition as a potential disease-modifying strategy for genetically driven ossification disorders.

FDA’s breakthrough therapy designation for Johnson & Johnson’s co-formulated bispecific antibody therapy validates dual EGFR/MET targeting in HPV-negative head and neck cancer.